Your AI Can Be Switched Off

Imagine this.

One morning, your electricity provider decides you are not using electricity in the “right” way.

So they cut your access.

No lights. No Wi-Fi. No fridge. No laptop charger. Nothing.

Would that be acceptable?

Of course not.

Electricity is too fundamental. Once a society depends on it, cutting people off because a provider disapproves of how they use it starts to look absurd, dangerous, and far too centralized.

Now replace electricity with intelligence.

That is the world we are quietly building with cloud AI.

Millions of people now rely on AI models every day to work, search, write, think, summarize, code, and solve ordinary problems. But unlike electricity, that intelligence usually does not belong to the user. It is rented from a provider.

And rented intelligence comes with a catch.

It can be rate limited. It can be changed. It can be censored. It can become more expensive. It can disappear behind a new plan. It can stop working when servers go down. And yes, it can be switched off.

If AI is becoming part of how people think and work, this should worry everyone.

Try Fenn if you want private AI that finds any file on your Mac, without sending your work to the cloud.

The real problem is dependency

The issue is not that OpenAI or Anthropic make useful products. They clearly do.

The issue is the structure.

When your workflow depends on a cloud model, you are not building on software you own. You are building on permission.

That means a provider can decide:

which use cases are allowed

which outputs are acceptable

which accounts stay active

which model version you get

how much you pay to keep access

That is not ownership. That is dependency.

It is the difference between owning a tool and renting one from a company that can change the terms whenever the relationship becomes inconvenient.

We already covered that trust problem more directly in "Can you trust OpenAI?" and "Can you trust Anthropic (Claude)?"

Why this matters more than people think

A lot of people still treat AI like a fun website.

But for many professionals, it is already much more than that.

AI is becoming part of everyday work:

finding the right clause inside a long contract

locating the exact slide in an old deck

searching across screenshots, PDFs, recordings, and notes

summarizing a folder before a meeting

checking whether a receipt, invoice, or report matches specific criteria

Once a tool becomes part of your real workflow, losing access is not a small inconvenience.

It is a productivity outage.

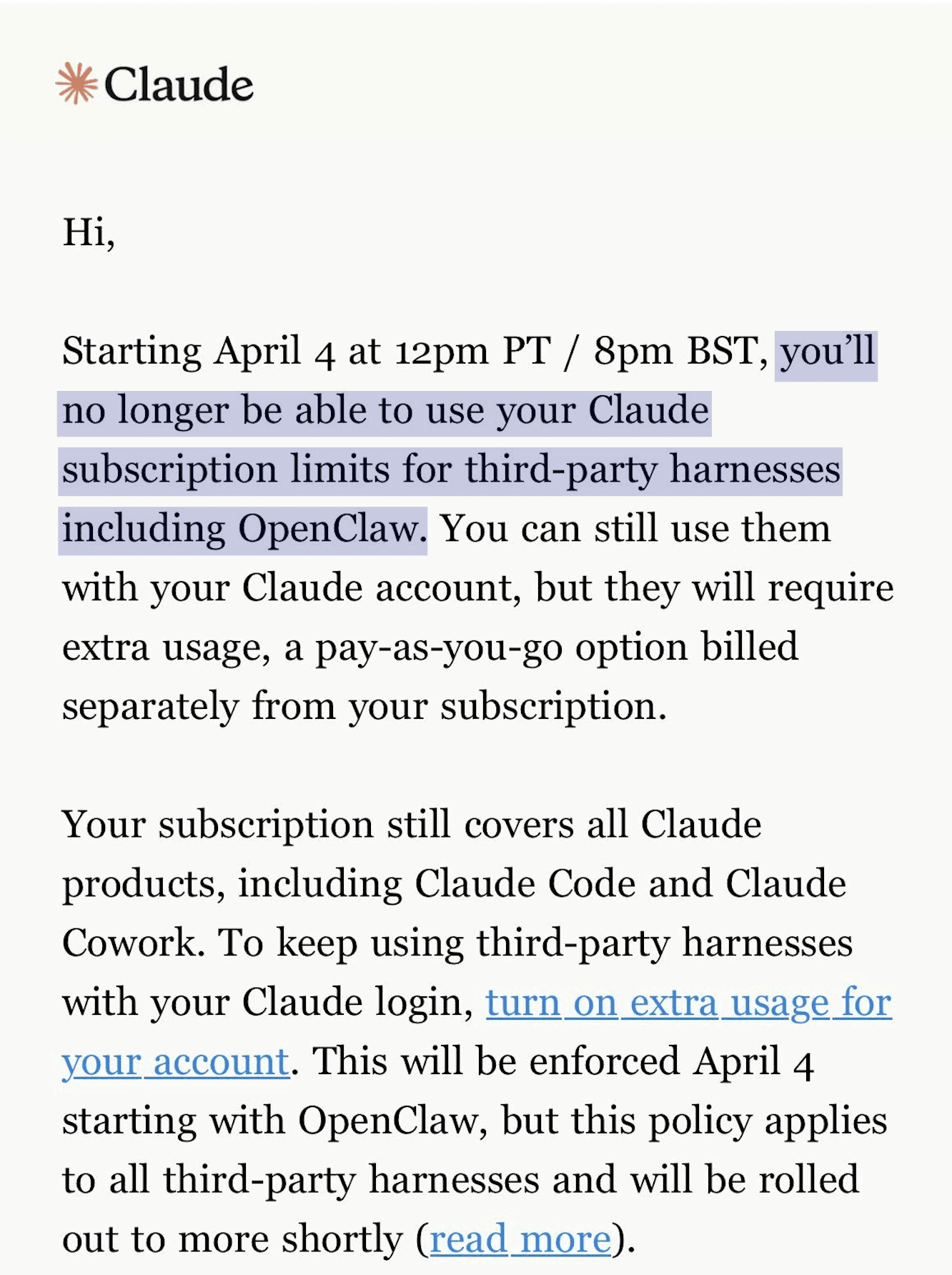

And this is not fiction. Anthropic just cut off the OpenClaw project's access to Claude models.

That is why cloud-only AI is a fragile foundation. We made a related point in Your ChatGPT Backup Plan: Offline AI on Your Mac.

Open source models change the power balance

This is why open source models matter so much.

Once a model is on your machine, it is no longer just a remote service you borrow from a company. It becomes part of your own computing environment.

That changes everything.

No cloud provider can suddenly remove your access to the model on your own Mac.

No provider has to approve each use case before you can think with it.

No provider needs your private files to leave your machine just so you can search, classify, summarize, or ask questions about them.

And no professional should have to wonder whether confidential work is becoming raw material for someone else’s system.

This is the deeper value of open models. Not only cost. Not only performance.

Freedom.

Freedom to keep working. Freedom to stay private. Freedom to build workflows that do not disappear when someone else changes a policy, pricing tier, or product direction.

We wrote more about that here: Why Open Source AI Always Wins: Fenn and the Future of AI on your Mac.

Privacy is freedom

People often talk about privacy like it is a secondary feature.

A nice extra. A preference. A checkbox.

It is not.

Privacy is freedom.

If your work has to be sent somewhere else before you can use AI, then you are always accepting a trade.

Maybe that trade is worth it sometimes. But it should not be the default for everything.

Especially not for:

client files

financial records

legal documents

personal archives

research material

internal company knowledge

You do not need to send your data anywhere just to enjoy AI.

That is the old assumption. It is no longer true.

Why Fenn is built on open models, not cloud dependency

This is exactly why Fenn is built on top of open source models, not on top of closed cloud models like GPT or Claude.

Fenn is private AI that finds any file on your Mac.

That promise only makes sense if the intelligence stays under your control.

With Fenn, everything stays on your Mac.

Your files are not sent to a cloud provider just so you can search them. Your confidential work does not leave your machine. The models run locally. The product works offline. And the system is designed around the idea that your private knowledge should remain yours.

That is not just a privacy benefit. It is a product decision based on independence.

What that lets you do in practice

Because Fenn runs privately on your Mac, it can help you do real work on your own data without cloud exposure.

With Fenn, you can:

search inside documents, PDFs, images, screenshots, audio, video, Photoshop files, InDesign files, PowerPoint files, and more

open the exact page, frame, or timestamp instead of just getting a filename

run more complex searches that would normally require hours of manual checking

chat with your files 100% privately

extract transcripts from audio and video

rename files with AI

search files by similarity

This is where local AI stops being an ideology and becomes a practical advantage.

You are not giving up capability in exchange for privacy.

You are getting both.

A simple example

Imagine you need to find all your receipts from Restaurant X above $100.

Without the right tool, that means opening PDFs one by one, checking screenshots, maybe searching email attachments, maybe looking through scans, maybe guessing filenames, maybe wasting an hour.

With Fenn, that kind of query becomes natural.

You ask for the receipts from Restaurant X above $100, and Fenn can search across your indexed files privately on your Mac, then take you straight to the documents that match.

That is the difference between a file search app and an actual intelligence layer for your own machine.
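To make the receipt query concrete, here is a minimal sketch of the filtering step that such a question boils down to once the merchant and total have been extracted from each file. It is not Fenn's implementation. The `Receipt` type, the `receipts_above` helper, and the sample file paths are all hypothetical, invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Receipt:
    """Hypothetical record extracted from one locally indexed file."""
    path: str
    merchant: str
    total: float  # amount in dollars


def receipts_above(receipts, merchant, minimum):
    """Return receipts from `merchant` whose total is strictly above `minimum`."""
    wanted = merchant.casefold()  # compare merchant names case-insensitively
    return [
        r for r in receipts
        if r.merchant.casefold() == wanted and r.total > minimum
    ]


# Made-up sample data standing in for a local index (assumption, not real output).
index = [
    Receipt("scans/dinner-march.pdf", "Restaurant X", 142.50),
    Receipt("screenshots/lunch.png", "Restaurant X", 38.00),
    Receipt("mail/invoice-0412.pdf", "Restaurant Y", 210.00),
    Receipt("scans/team-dinner.pdf", "restaurant x", 100.00),
]

matches = receipts_above(index, "Restaurant X", 100)
for r in matches:
    print(r.path, r.total)
```

The filter itself is trivial. The hard part is turning scans, screenshots, and PDFs into structured records in the first place, and in a local setup that step also happens on the machine.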

Why the Mac is the right place for this

The good news is that Macs with Apple silicon are surprisingly good at running AI locally.

That makes this whole shift possible.

You no longer need a giant cloud stack just to get useful AI for personal and professional workflows. A modern Mac can do far more on device than most people realize.

That is why private AI on macOS feels less like a futuristic idea now, and more like the obvious next step.

The future should not belong to permissioned intelligence

The biggest long-term risk in AI is not only model quality.

It is control.

Do we want a future where intelligence lives behind a few remote gates, controlled by providers who can decide what remains available, what gets refused, and what kind of work is allowed?

Or do we want a future where people can run useful models on their own machines, with their own files, under their own control?

That is the real choice.

When electricity became essential, society did not accept that it should exist only as a revocable privilege for approved users.

As AI becomes essential, we should not accept that model either.

The bottom line

Your AI can be switched off if it belongs to someone else.

That is why open source models matter. That is why local AI matters. And that is why privacy matters far beyond marketing language.

With Fenn, the model runs on your Mac. Your data stays on your Mac. Your confidential work stays yours.

Because AI should help you think and find answers, not make you dependent on a remote gatekeeper.

Download Fenn and find the moment, not the file.