Siri Could Soon Run on Google Servers

Apple’s long-awaited AI upgrade for Siri might rely more heavily on Google than initially expected.

A new report suggests that Apple has asked Google to help set up servers for the upcoming Gemini-powered Siri, raising fresh questions about where user data could be processed in the future.

For a company that has spent years marketing privacy as a core differentiator, the possibility that Siri requests could run on Google infrastructure is notable.

The original plan: Apple devices and Apple servers

When Apple first announced the next generation of Siri and Apple Intelligence, the company emphasized a hybrid approach:

On-device AI for many tasks

Private Cloud Compute (PCC) for more complex requests

Private Cloud Compute is Apple’s own cloud infrastructure designed to extend the capabilities of on-device models while maintaining Apple’s privacy guarantees.

At the time, Apple said that its new AI features would continue to run on Apple devices and Apple’s own cloud infrastructure.

The new report: Google servers may play a bigger role

According to reporting from The Information, summarized by The Verge, Apple has asked Google to explore setting up servers to support the Gemini-powered Siri.

This builds on Apple’s January announcement that Google’s Gemini models would power the next generation of Apple Foundation Models used in Siri and other Apple Intelligence features.

However, the initial announcement left one key detail unclear:

Where exactly would those models run?

Apple highlighted on-device AI and Private Cloud Compute, but did not explicitly confirm whether some workloads could run on Google’s infrastructure.

The new report suggests that Google’s cloud may be involved more directly than previously assumed.

Why Apple might rely on Google infrastructure

The reason is likely simple: AI infrastructure is extremely expensive and complex to scale.

Companies like Google, Microsoft, and Amazon have spent years building massive global data center networks to support AI workloads.

Apple, by comparison, has historically invested less aggressively in large-scale cloud infrastructure.

According to The Information, only about 10% of Apple’s Private Cloud Compute capacity is currently used on average, which suggests Apple is still experimenting with how its AI architecture should scale.

If Apple wants to deploy advanced AI quickly to billions of devices, relying on Google’s infrastructure could accelerate that process.

What this means for privacy

Apple has built its brand around the idea that your data stays private.

If Siri requests start running on Google infrastructure, the natural question becomes:

How much data leaves your device, and where does it go?

Apple could still maintain strict controls.

Possible scenarios include:

requests processed on Google hardware but under Apple control

encrypted requests that Google cannot inspect

strict data deletion policies

But until Apple clarifies the architecture, the exact privacy model remains unclear.

The bigger issue: cloud AI vs local AI

This situation highlights a broader shift happening across the tech industry.

Most modern AI assistants depend on large cloud models running in massive data centers.

That creates a fundamental tradeoff: cloud AI offers powerful models, but it requires sending requests, and sometimes documents, to external servers.

For casual questions, that tradeoff may be fine.

For professional work, legal documents, research material, or confidential files, many users prefer a different model.

A different approach: AI that runs locally

Instead of sending files to cloud AI providers, some workflows keep AI directly on the device.

On Mac, this is increasingly possible thanks to Apple Silicon.

Tools like Fenn focus on that approach.

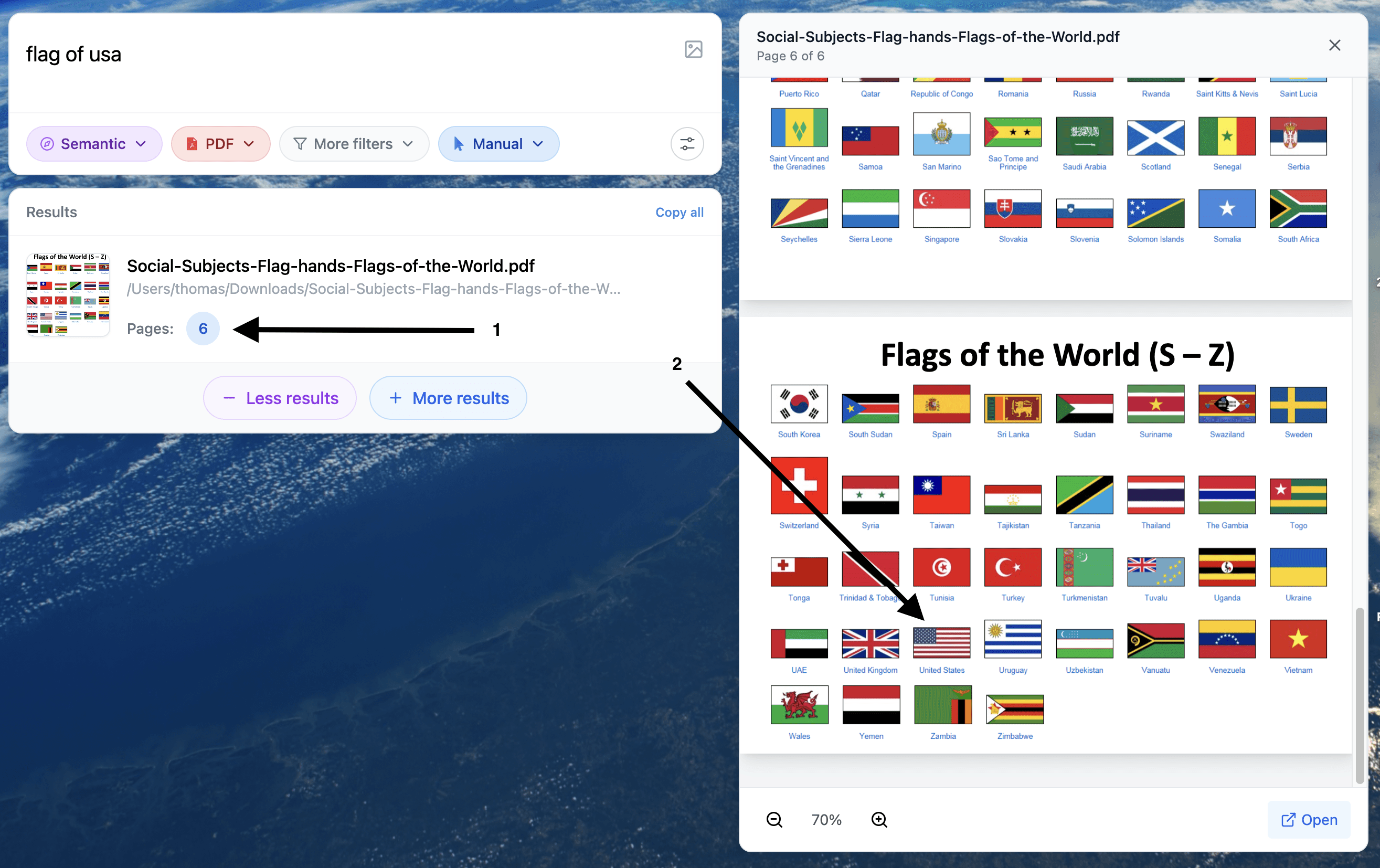

Fenn is a private AI tool that finds any file on your Mac, allowing you to:

search inside PDFs, documents, slides, screenshots, and recordings

jump directly to the exact page, slide, or timestamp

extract information from files using AI

All without sending your files to external AI providers like OpenAI, Google, or Anthropic.

Example of a search for a visual element in a PDF:

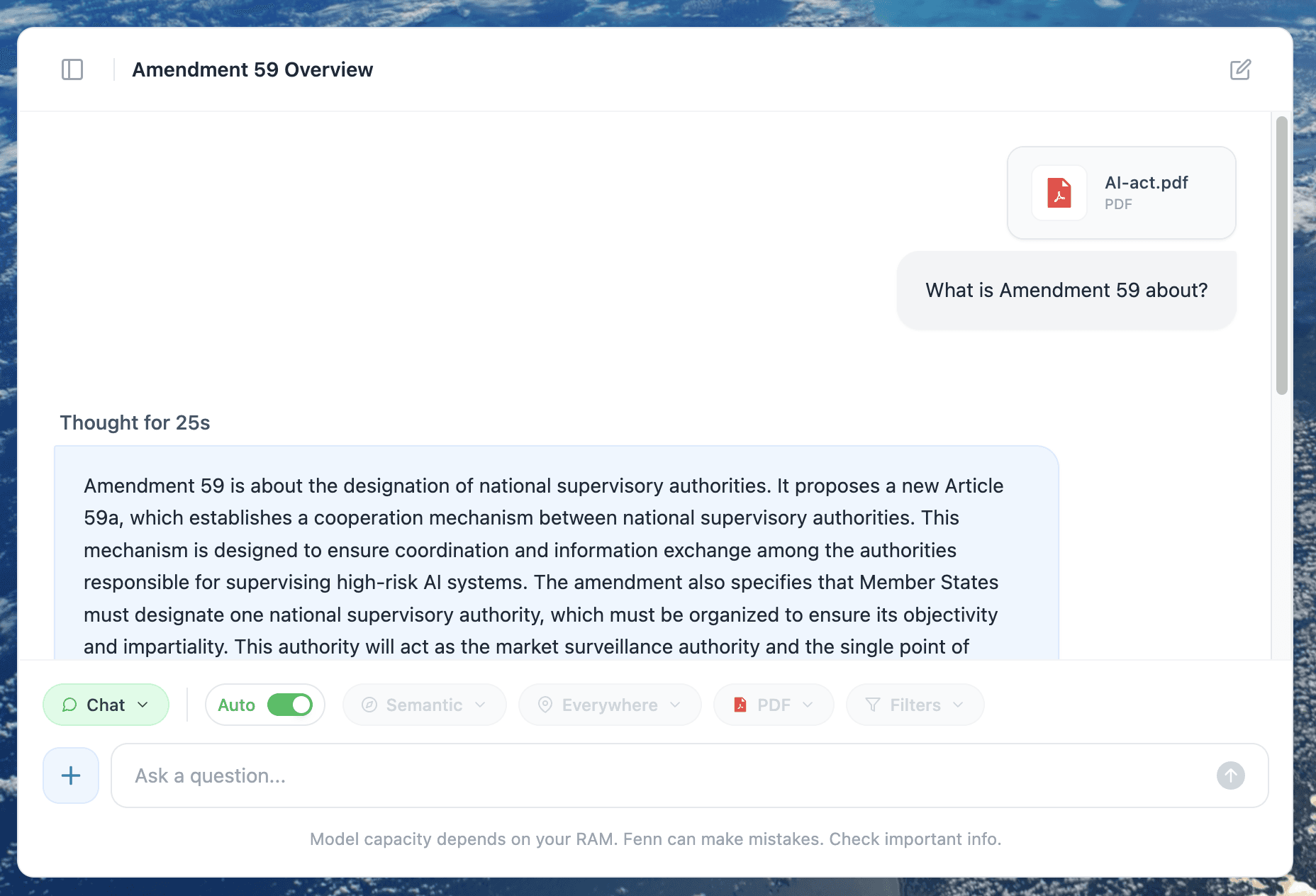

After finding a file, you can chat with it privately. Your files never leave your Mac.

The bottom line

The next generation of Siri may rely more heavily on Google infrastructure than Apple originally implied.

That doesn’t automatically mean your privacy is compromised.

But it does show how difficult it is for even Apple to build modern AI systems without relying on massive cloud infrastructure.

And for users who prefer keeping their data fully local, it’s a reminder that not every AI workflow needs to depend on the cloud.